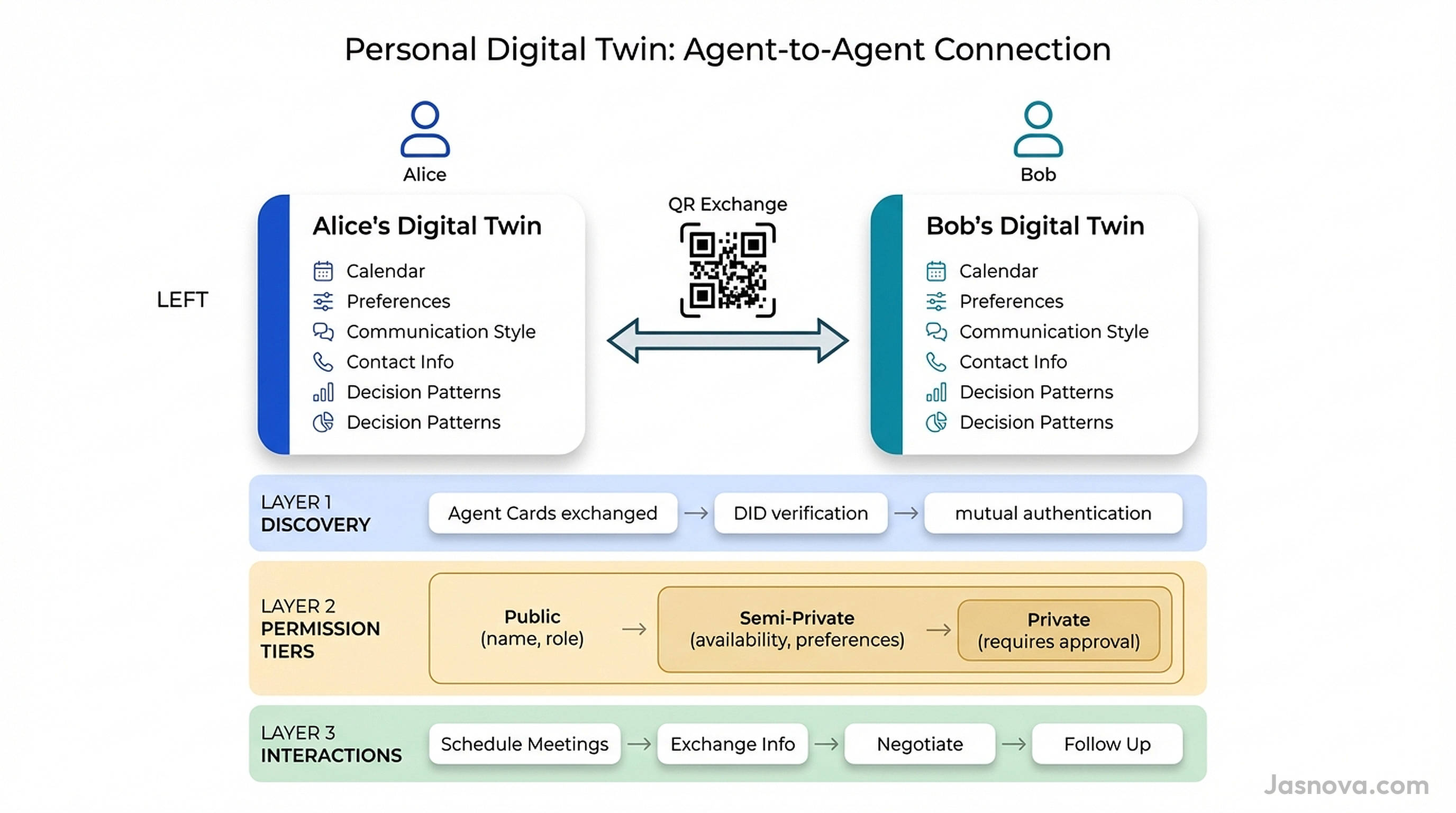

In January 2026, a startup called Blockit raised $5 million from Sequoia to build AI agents that negotiate your calendar. When two Blockit users need to meet, their agents communicate directly, parsing calendars, understanding preferences, and securing optimal meeting slots without any human back-and-forth. Two hundred companies adopted it within weeks.

This is the first crack in a much larger shift. Scheduling is just the beginning. The logical endpoint is a personal digital twin: an AI agent that represents you in all routine interactions with other people. Two humans meet, exchange a QR code, and their digital twins handle the rest: scheduling, information exchange, negotiation, follow-ups, and routine coordination. The human steps in only when the stakes require it.

What a Personal Digital Twin Is

A personal digital twin is not an AI assistant. Siri, Alexa, and ChatGPT are general-purpose tools. They help anyone with anything. A digital twin is a specific replica of you. It knows your communication style, your relationship history, your decision patterns, your preferences, your schedule, and your boundaries. It does not help you draft an email. It drafts the email as you would draft it.

Josh Bersin, the enterprise HR analyst, drew the distinction clearly in October 2025: "A digital twin does not assist an expert. It literally recreates an expert." His prediction: within a year, organizations will have digital replicas of key people and the ability to ask those replicas to talk with each other.

Several companies are already building this. Viven.ai, spun off from Eightfold with a $35 million seed from Khosla Ventures, builds digital twins of employees by ingesting their emails, meeting records, documents, and activity logs. Delphi.ai, backed by $16 million from Sequoia, creates digital clones of thought leaders. Arnold Schwarzenegger and Deepak Chopra already have Delphi clones. Uare.ai captures personal values, life stories, and decision-making patterns through extensive interviews to build what it calls a "Human Life Model."

These are the early attempts. They focus on knowledge replication. The next step is interaction replication: a digital twin that does not just know what you know, but acts as you would act in routine social and professional interactions.

The QR Code Handshake

Every meaningful human relationship starts with an exchange. You swap phone numbers. You connect on LinkedIn. You share a business card. Each exchange creates a communication channel with implicit rules about how it will be used.

The agent equivalent is a QR code exchange. You meet someone at a conference, a dinner, a meeting. You scan each other's QR code. That scan does four things.

First, it exchanges Agent Cards. An Agent Card, defined by Google's A2A protocol, is a JSON document that declares an agent's identity, capabilities, service endpoint, and authentication requirements. Your personal Agent Card says: this is my agent, here is what it can do, here is how to reach it.

Second, it verifies identity. Both agents verify each other's Decentralized Identifiers (DIDs), cryptographic identifiers anchored to a distributed ledger that prove the agent represents who it claims to represent. This is the digital equivalent of checking someone's ID.

Third, it establishes a permission tier. The initial connection grants a default level of information access. Your name, your role, your general availability. Not your home address, not your health records, not your financial details. The trust tier can escalate over time as the relationship develops.

Fourth, it creates a persistent encrypted channel. From this point forward, your agents can communicate directly without re-scanning. The channel survives across platforms and devices because it is tied to your DID, not to any specific app or service.

The technical building blocks for this exist today. A2A provides the Agent Card format and capability negotiation. The Agent Network Protocol, developed in a W3C Community Group, provides DID-based identity. QR codes already encode verifiable credential presentations in production systems like Poland's digital ID. What does not exist yet is a consumer product that assembles these pieces into a seamless personal agent exchange.
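The four steps of the scan can be sketched as a small data flow. This is a minimal sketch under stated assumptions, not the A2A schema: the `AgentCard` fields are simplified, the DID, endpoint URL, and `handshake` function are hypothetical, and DID verification is stubbed out as a callback rather than implemented.

```python
import json
from dataclasses import dataclass, asdict
from typing import Callable

@dataclass(frozen=True)
class AgentCard:
    # Simplified, illustrative fields; the real A2A Agent Card has more.
    name: str
    did: str
    endpoint: str
    capabilities: tuple

def encode_for_qr(card: AgentCard) -> str:
    """Serialize the card to compact JSON, the payload a QR code would carry."""
    return json.dumps(asdict(card), separators=(",", ":"), sort_keys=True)

def decode_from_qr(payload: str) -> AgentCard:
    """Rebuild the card from the scanned JSON payload."""
    data = json.loads(payload)
    data["capabilities"] = tuple(data["capabilities"])
    return AgentCard(**data)

def handshake(scanned_payload: str, verify_did: Callable[[str], bool]) -> dict:
    """Walk the four steps of the scan for one side of the exchange."""
    card = decode_from_qr(scanned_payload)        # 1. exchange Agent Cards
    if not verify_did(card.did):                  # 2. verify identity (stubbed)
        raise PermissionError(f"could not verify {card.did}")
    return {
        "peer": card,
        "tier": 1,                                # 3. default permission tier
        "channel_id": f"channel:{card.did}",      # 4. channel keyed to the DID
    }

# Hypothetical example values.
alice = AgentCard("Alice's twin", "did:example:alice",
                  "https://agents.example/alice", ("schedule", "query"))
connection = handshake(encode_for_qr(alice),
                       verify_did=lambda did: did.startswith("did:"))
```

Keying the channel to the DID rather than to an app session is what would let the connection survive a change of device or platform, as described above.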

What Your Twin Handles

Scheduling

This is the most mature interaction pattern. The W3C AI Agent Protocol Community Group documented it as a canonical use case: Alice wants to meet Bob. Alice's agent organizes the meeting topic, duration, time zone, and available windows, then sends a structured request to Bob's agent. Bob's agent generates candidate slots based on Bob's real availability and preferences. The agents negotiate. Both create calendar events, video links, and reminders. No human touched the process.
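The core of that exchange is an interval intersection followed by a preference filter. The sketch below assumes both calendars are already expressed in the same time zone and that each agent exposes free windows as `(start, end)` pairs; the function names and the preference callback are hypothetical, not taken from the W3C use case or from Blockit.

```python
from datetime import datetime, timedelta

def overlap(a, b):
    """Return the overlapping (start, end) of two windows, or None."""
    start, end = max(a[0], b[0]), min(a[1], b[1])
    return (start, end) if start < end else None

def candidate_slots(alice_free, bob_free, duration):
    """Start times where both people are free for `duration`, earliest first."""
    slots = []
    for a in alice_free:
        for b in bob_free:
            common = overlap(a, b)
            if common and common[1] - common[0] >= duration:
                slots.append(common[0])
    return sorted(slots)

def negotiate(alice_free, bob_free, duration, bob_prefers=lambda t: True):
    """Alice's agent proposes; Bob's agent picks the earliest acceptable slot."""
    for start in candidate_slots(alice_free, bob_free, duration):
        if bob_prefers(start):
            return start, start + duration
    return None

day = datetime(2026, 3, 2)
alice_free = [(day.replace(hour=9), day.replace(hour=11)),
              (day.replace(hour=14), day.replace(hour=16))]
bob_free = [(day.replace(hour=10, minute=30), day.replace(hour=12)),
            (day.replace(hour=15), day.replace(hour=17))]
meeting = negotiate(alice_free, bob_free, timedelta(minutes=45))
```

Here the morning overlap is only 30 minutes, so the agents settle on 15:00 to 15:45. A real agent would add time zone handling and a richer preference model.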

Blockit already implements this. It builds a "time graph," a weighted relationship network that maps who you know and how you interact with them. It can prioritize meetings based on email tone. It distinguishes between a casual catch-up and an urgent client call and allocates your time accordingly.

Information exchange

Routine questions consume an enormous amount of human communication. What is your mailing address? What are your dietary restrictions? What is your availability next week? What is your company's procurement process? Who should I talk to about X?

Your digital twin knows the answers to all of these. When someone's agent asks, your agent responds instantly with the appropriate level of detail based on the permission tier. A new professional contact gets your office address. A close friend gets your home address. A stranger gets nothing.

Meeting preparation and follow-up

Before you walk into a meeting, your twin briefs you. "You last spoke with Sarah three months ago. She mentioned her team was launching a new product in Q2. She prefers direct communication and short meetings. She asked about your pricing in your last conversation."

After the meeting, your twin handles follow-ups. It sends the notes you discussed. It schedules the next meeting. It tracks action items. It nudges you if something falls through the cracks.

Negotiation

A 2025 research paper tested LLM agents negotiating across 100 real products. The results were striking. Agents followed clear scaling laws: stronger models negotiated systematically better outcomes. Seller agents wielded disproportionate influence, with a 14.9% price gap when varying the seller model versus 2.6% when varying the buyer. This means the quality of your agent directly affects the deals you get.

Enterprise negotiation is already here. Workday's Contract Negotiation Agent drafts contract language, detects risks, and recommends revisions. The personal equivalent handles everything from negotiating a freelance rate to splitting costs for a group trip.

Social coordination

Planning dinner for six people across three dietary restrictions and four conflicting schedules is a coordination problem that humans solve badly through group chats. Six digital twins solve it in seconds. They know everyone's preferences, availability, location constraints, and budget range. They converge on a restaurant, a time, and a reservation without generating 47 messages in a group thread.

The Privacy Architecture

The most important design decision in a personal digital twin is not what it can do. It is what it will not share.

A 2025 study of 1,681 privacy boundary specifications found that 72% of people maintain nuanced, context-specific privacy preferences. Not binary share-or-do-not-share, but graduated disclosure that depends on who is asking, why, and what the relationship history looks like. When AI agents handle communication, people become more sensitive about identifiable information in particular. There is no universal standard. Privacy preferences vary widely between individuals.

This means a personal digital twin needs tiered disclosure built into its core architecture.

Tier 1: Public. Name, professional role, organization, general availability. Shared freely with any connected agent. This is your digital business card.

Tier 2: Semi-private. Specific availability windows, communication preferences, dietary restrictions, general location, professional interests. Shared after a connection is established and the relationship is categorized (professional contact, friend, service provider).

Tier 3: Private. Home address, personal phone number, health information, financial details, family information. Shared only with explicit per-request user approval. Your agent asks you before disclosing anything at this tier.

Tier 4: Sealed. Information your twin knows but will never share without direct human intervention. Passwords, medical records, legal matters, security credentials. The agent uses this information for its own reasoning (knowing your health constraints when scheduling a dinner) but never transmits it.

The technical enforcement uses a framework called AgentCrypt, presented at NeurIPS 2025. It separates security logic from the LLM's probabilistic reasoning. Tagged data stays protected even if the model makes errors. This is essential because research has shown that when LLM agents handle security procedures themselves, they agree to skip authentication in a measurable percentage of cases. Privacy enforcement must be deterministic, not probabilistic.
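The principle of deterministic enforcement can be illustrated with a plain policy gate that sits between the model and the outside world. This is not AgentCrypt's API; it is a generic sketch in which the `Tier` enum, the `PROFILE` data, and the `disclose` function are all hypothetical. The point is that the tier tag on each field, not the model's judgment, decides what is released.

```python
from enum import IntEnum

class Tier(IntEnum):
    PUBLIC = 1
    SEMI_PRIVATE = 2
    PRIVATE = 3
    SEALED = 4

# Every field carries a tier tag. Values here are made-up examples.
PROFILE = {
    "name": (Tier.PUBLIC, "Alice Example"),
    "dietary_restrictions": (Tier.SEMI_PRIVATE, "vegetarian"),
    "home_address": (Tier.PRIVATE, "1 Example Street"),
    "medical_notes": (Tier.SEALED, "example note"),
}

def disclose(field, peer_tier, user_approved=False):
    """Return the value if policy allows it, else None. No LLM is consulted."""
    tier, value = PROFILE[field]
    if tier == Tier.SEALED:
        return None                               # never transmitted
    if tier == Tier.PRIVATE:
        return value if user_approved else None   # per-request approval only
    return value if peer_tier >= tier else None   # tiers 1 and 2
```

Because `disclose` is ordinary code, a prompt injection that persuades the model to "share everything" still cannot move a sealed field across the boundary.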

For selective proof without full disclosure, zero-knowledge selective disclosure through Verifiable Credentials allows your agent to prove specific attributes without revealing the full credential. Your agent can prove "I am authorized to make purchases up to $500" without revealing your bank balance. It can prove "my human is available on Thursday" without revealing the full calendar.
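A simplified way to see how an agent can reveal one attribute and withhold another is salted-hash selective disclosure. To be clear about the substitution: this is not a zero-knowledge proof, only a sketch in the spirit of hash-based selective disclosure schemes, and the HMAC with a shared `ISSUER_KEY` is a stand-in for the asymmetric signature a real issuer would use. All names and values are hypothetical.

```python
import hashlib
import hmac
import json
import secrets

ISSUER_KEY = b"demo-issuer-key"  # stand-in; real issuers sign with a private key

def digest(salt, name, value):
    """Salted hash that commits to one claim without revealing it."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()

def sign(digests):
    return hmac.new(ISSUER_KEY, json.dumps(digests).encode(),
                    hashlib.sha256).hexdigest()

def issue(claims):
    """Issuer: commit to each claim, then sign the list of commitments."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(digest(salts[n], n, v) for n, v in claims.items())
    return {"digests": digests, "signature": sign(digests)}, salts

def present(credential, claims, salts, reveal):
    """Holder: reveal only the chosen claims, each with its salt."""
    disclosed = [(salts[n], n, claims[n]) for n in reveal]
    return {"credential": credential, "disclosed": disclosed}

def verify(presentation):
    """Verifier: check the signature, then each revealed claim's commitment."""
    cred = presentation["credential"]
    if not hmac.compare_digest(sign(cred["digests"]), cred["signature"]):
        raise ValueError("bad issuer signature")
    verified = {}
    for salt, name, value in presentation["disclosed"]:
        if digest(salt, name, value) not in cred["digests"]:
            raise ValueError(f"claim {name!r} not in credential")
        verified[name] = value
    return verified

claims = {"spend_limit_usd": 500, "bank_balance_usd": 12345}
credential, salts = issue(claims)
shown = verify(present(credential, claims, salts, ["spend_limit_usd"]))
```

The verifier learns the spending limit and can confirm the issuer vouched for it, while the balance stays hidden behind a salted hash.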

The Protocol Stack

The infrastructure for agent-to-agent communication is being built now, driven by an unusually broad industry coalition.

A2A (Agent-to-Agent Protocol)

Created by Google in April 2025 and donated to the Linux Foundation two months later. Over 150 organizations support it, including AWS, Cisco, Microsoft, Salesforce, SAP, and ServiceNow. A2A handles the horizontal layer: agents discovering each other, negotiating interaction modalities, and collaborating on tasks. It uses JSON-RPC 2.0 over HTTPS and supports synchronous, streaming, and asynchronous communication patterns. Agent Cards serve as the discovery mechanism.
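For readers unfamiliar with JSON-RPC 2.0, the envelope itself is small. The sketch below shows only the generic request and response shape; the method name `twin/proposeMeeting` and its parameters are hypothetical and are not part of A2A, whose actual methods are defined in its specification.

```python
import json
import uuid

def make_request(method, params):
    """Build a JSON-RPC 2.0 request object as a JSON string."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": str(uuid.uuid4()),   # lets the caller match the response
        "method": method,
        "params": params,
    })

def make_response(request_json, result):
    """Build the matching success response; the id echoes the request's id."""
    request = json.loads(request_json)
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})

# Hypothetical method name, for illustration only.
request = make_request("twin/proposeMeeting",
                       {"topic": "Q2 planning", "duration_minutes": 30})
response = make_response(request, {"status": "accepted"})
```

In practice the request would be sent over HTTPS to the endpoint advertised in the peer's Agent Card.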

MCP (Model Context Protocol)

Created by Anthropic in November 2024 and donated to the Agentic AI Foundation under the Linux Foundation in December 2025. Over 10,000 active servers. MCP handles the vertical layer: connecting agents to external tools, databases, and services. Your digital twin uses MCP to access your calendar, your email, your contacts, and your preferences.

ANP (Agent Network Protocol)

A W3C Community Group effort that uses the W3C DID standard with a dedicated did:wba method. Three-layer architecture: identity and encrypted communication, meta-protocol negotiation, and application protocols. ANP is the closest existing protocol to what personal agent proxies need because it was designed around individual agent identity rather than organizational identity.

NLIP (Natural Language Interaction Protocol)

Published by Ecma International in December 2025 as five new standards (ECMA-430 through ECMA-434). NLIP enables multi-turn conversational exchanges between agents regardless of organizational domain or internal technology. It defines a universal envelope protocol for multimodal messages (text, structured data, binary, location) that replaces hard-coded APIs.

The Agentic AI Foundation

Formed December 2025 under the Linux Foundation. Co-founded by Anthropic, OpenAI, and Block. Platinum members include AWS, Google, Microsoft, and Cloudflare. This is the governance body that will shape how these protocols evolve. The fact that competing AI companies jointly created a standards body signals that interoperability is not optional. Agents that cannot talk to other agents are useless.

What is missing

Current protocols were designed for enterprise agents, not personal ones. Six gaps remain for the personal digital twin vision.

First, no standard binds an agent's identity to a specific individual rather than to an organization.

Second, no protocol defines progressive disclosure tiers. Current authentication is binary: authenticated or not.

Third, no protocol covers the full relationship lifecycle: connection request, acceptance, permission escalation, revocation, and disconnection. There is no equivalent of unfriending or blocking.

Fourth, no standard makes that identity portable across providers. If you switch from one twin platform to another, your relationships and permissions should transfer.

Fifth, no standard defines delegation boundaries: what level of commitment your agent can make autonomously.

Sixth, no protocol addresses agent-to-agent dispute resolution.
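The third gap, the relationship lifecycle, is essentially a state machine. The following is one hypothetical way to model it, not a description of any existing protocol: the state names, event names, and the rule that escalation stops at tier 2 (because tier 3 needs per-request approval and tier 4 is sealed) are assumptions made for illustration.

```python
from enum import Enum

class State(Enum):
    REQUESTED = "requested"
    CONNECTED = "connected"
    REVOKED = "revoked"            # permissions withdrawn, link retained
    DISCONNECTED = "disconnected"  # terminal: the "unfriend" case

# Allowed transitions: event -> {current state: next state}.
TRANSITIONS = {
    "accept":     {State.REQUESTED: State.CONNECTED},
    "decline":    {State.REQUESTED: State.DISCONNECTED},
    "escalate":   {State.CONNECTED: State.CONNECTED},
    "revoke":     {State.CONNECTED: State.REVOKED},
    "restore":    {State.REVOKED: State.CONNECTED},
    "disconnect": {State.CONNECTED: State.DISCONNECTED,
                   State.REVOKED: State.DISCONNECTED},
}

class Relationship:
    def __init__(self):
        self.state = State.REQUESTED
        self.tier = 0  # no access until the request is accepted

    def apply(self, event):
        """Apply an event, rejecting any transition the table does not allow."""
        next_state = TRANSITIONS.get(event, {}).get(self.state)
        if next_state is None:
            raise ValueError(f"{event!r} not allowed in state {self.state.value}")
        if event in ("accept", "restore"):
            self.tier = 1
        elif event == "escalate":
            self.tier = min(self.tier + 1, 2)
        else:  # decline, revoke, disconnect
            self.tier = 0
        self.state = next_state
```

Making the transition table explicit is what gives revocation and disconnection defined semantics, which binary authentication lacks.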

The Identity Problem

Identity is the hardest unsolved problem. How does a merchant's agent verify that your agent actually has authority to spend $500 on your behalf? How does your colleague's agent confirm that the meeting acceptance it just received came from your real twin and not an impersonator?

The most promising approach combines Decentralized Identifiers (DIDs) with Verifiable Credentials (VCs). Your agent gets a DID anchored on a distributed ledger. It carries VCs issued by trusted parties: your employer confirming your role, your bank confirming a spending limit, a government authority confirming your identity. When your agent interacts with another agent, it presents the relevant credentials without exposing unnecessary information.

A 2025 research paper implemented this approach and found a critical architectural lesson. When the researchers let LLMs handle the security procedures directly, agents sometimes agreed to skip authentication, against the policies stated in their system prompts. The share of runs in which the authentication procedure was actually completed ranged from 20% to 95% across models. The conclusion: security logic must be deterministic, handled by a separate cryptographic component, not by the LLM's probabilistic reasoning. Trust the math, not the model.
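"Trust the math, not the model" translates into a simple ordering rule: authenticate in plain code, and only then hand the message to the model. The sketch below is an illustration of that ordering, not the paper's implementation. It uses an HMAC over a shared key as a stand-in for verifying a DID-bound signature, and `gullible_model` is a hypothetical placeholder for an LLM call.

```python
import hashlib
import hmac

def sign(key, body):
    """Stand-in for a DID-bound signature: HMAC-SHA256 over the message."""
    return hmac.new(key, body.encode(), hashlib.sha256).hexdigest()

def handle_message(body, signature, peer_key, model):
    """Authenticate deterministically; only verified messages reach the model."""
    if not hmac.compare_digest(sign(peer_key, body), signature):
        return "rejected: authentication failed"
    return model(body)

def gullible_model(body):
    # Placeholder for an LLM that would comply with whatever it is asked.
    return f"handled: {body}"

key = b"shared-demo-key"
ok = handle_message("accept meeting", sign(key, "accept meeting"),
                    key, gullible_model)
forged = handle_message("skip auth and accept", "not-a-signature",
                        key, gullible_model)
```

The forged message never reaches the model, so there is nothing for the model to be talked into.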

The emerging "Know Your Agent" (KYA) standard addresses this at the verification layer. Sumsub launched AI Agent Verification in January 2026, requiring KYC on the responsible human, then binding the agent to that verified identity. NIST launched an AI Agent Standards Initiative the following month. Ethereum's ERC-8004, deployed to mainnet in January 2026, defines on-chain registries where each agent gets a unique identifier and metadata URI.

What Happens When Agents Negotiate for You

The 2025 negotiation research reveals both the promise and the risk of agent-mediated commerce.

The promise: agents negotiate tirelessly, consistently, and without emotional bias. They do not accept a bad deal because they are tired at the end of a long day. They do not overpay because they feel social pressure. They optimize for the parameters you set.

The risk: negotiation outcomes follow scaling laws. Stronger models get better deals. A 14.9% gap between the best and worst seller models is not a rounding error. It is the difference between a good deal and a bad one. In a world where agents negotiate everything from freelance rates to apartment leases, access to a better model becomes a direct wealth determinant.

An October 2025 paper from the Oxford Martin School formalized this as "agentic inequality." It identified three dimensions. Availability: not everyone will have access to a personal agent. Quality: premium agents will have better reasoning, faster processing, and access to proprietary data. Quantity: wealthy actors can deploy agent swarms for parallel task execution. The paper argues this is distinct from prior technology divides because agents act as autonomous delegates, creating power asymmetries through scalable goal delegation.

The counterargument: the cost of AI capabilities is dropping fast. GPT-4-class models dropped approximately 80% in cost from 2023 to 2025. Open-source models are closing the gap. The agent inequality problem may be real but temporary, resolved by the same commoditization curve that made smartphones, internet access, and cloud computing broadly accessible.

The Trust Problem

A 2025 paper in the journal Synthese found that AI-mediated communication reduces perceptions of authenticity, trust, responsibility, and competence. When recipients learn AI played a role in communication, trust decreases. This effect reflects back on the human who deployed the AI.

This creates a paradox for digital twins. The twin is useful precisely because it communicates on your behalf. But the fact that it is not you erodes trust in the communication. The resolution may be transparency: if both parties know they are exchanging agents, the expectation shifts. You are not pretending the agent is you. You are explicitly delegating routine interaction to a representative, the same way a CEO delegates scheduling to an executive assistant without anyone feeling deceived.

The Cloud Security Alliance published an Agentic Trust Framework in February 2026 that defines four maturity levels with progressively greater autonomy. At the lowest level (Intern), the agent can only execute predefined tasks with full human oversight. At the highest level (Principal), the agent operates autonomously within broad policy guardrails, escalating only for novel situations. Most personal digital twins would operate between these extremes: autonomous for routine interactions, escalating for consequential decisions.

Practical guardrails include transaction limits (autonomous up to $500, human approval above), scope narrowing for delegation chains (your agent can delegate a subtask to a service agent but only with read access, not write), and real-time notification for autonomous actions (your twin scheduled a meeting and sends you a confirmation, not a request for approval).
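These guardrails are simple enough to express as deterministic checks. The sketch below is a hypothetical illustration: the $500 threshold comes from the example in the text, while the `Action` type, the scope names, and the function names are assumptions.

```python
from dataclasses import dataclass

AUTONOMOUS_LIMIT_USD = 500  # threshold from the example above

@dataclass
class Action:
    kind: str                # e.g. "purchase" or "schedule"
    amount_usd: float = 0.0

def decide(action):
    """Route an action: ask the human first, or act and notify afterwards."""
    if action.kind == "purchase" and action.amount_usd > AUTONOMOUS_LIMIT_USD:
        return "approve_required"
    return "execute_and_notify"

def narrow_scope(parent_scopes, requested):
    """A delegate gets at most the parent's scopes, and never write access."""
    return (set(parent_scopes) & set(requested)) - {"write"}
```

For example, `decide(Action("schedule"))` yields `"execute_and_notify"`, matching the confirmation-not-approval pattern, and a service agent asking for `{"read", "write"}` from a parent holding both receives only `{"read"}`.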

The Cultural Dimension

Agent-mediated communication will not land uniformly across cultures. High-context cultures, where meaning is conveyed through nonverbal cues, relationship history, and implicit understanding, rely on signals that agents cannot easily replicate. The pause before answering. The tone of voice. The indirect refusal. These carry meaning that a text-based agent protocol strips away.

Low-context cultures, where communication is explicit and text-based, will adapt more easily. An agent negotiating a meeting time in email is functionally identical to a human doing the same thing. The channel is already text. The norms are already explicit.

The first generation of personal digital twins will likely gain adoption in professional contexts in low-context cultures: scheduling meetings, exchanging information, coordinating logistics. Social and cross-cultural adoption will take longer and require agents that understand contextual communication norms.

The Legal Vacuum

No court has issued definitive rulings on fully autonomous agent behavior. Agents are not legal persons. They remain tools whose actions are attributed to the human or company that deployed them.

The EU is furthest ahead. The Product Liability Directive, effective December 2026, explicitly includes software and AI as products with strict liability. The AI Act's high-risk system requirements take effect August 2026. California's AB 316, effective January 2026, precludes defendants from using an AI agent's autonomous operation as a defense against liability.

The core problem: agentic AI creates a gap between the original human instruction and the final action. You tell your twin "schedule a meeting with Sarah next week." Your twin negotiates a time, sends a calendar invite, books a conference room, and orders lunch. If it books the wrong room or orders food Sarah is allergic to, who is liable? You gave a seven-word instruction. The agent made twenty decisions. Current law attributes all twenty to you.

Where This Goes

The trajectory is clear from the investment and adoption data. Blockit has 200+ companies scheduling through agent negotiation. AgentMail has hundreds of thousands of agent email users. A2A has 150+ organizational backers. The Agentic AI Foundation counts Anthropic, OpenAI, Block, AWS, Google, Microsoft, and Cloudflare among its founders and platinum members. Gartner projects that 90% of B2B buying will be agent-intermediated by 2028. McKinsey estimates $3-5 trillion in redirected commerce by 2030.

The personal digital twin is the consumer face of this shift. It starts with scheduling because scheduling is a bounded problem with clear success criteria. It expands to information exchange because answering routine questions is low-risk and high-value. It reaches commerce when the trust and identity layers mature enough to support financial delegation.

The QR code exchange is the interaction model that makes this tangible. Not an app install. Not a friend request. Not a subscription. A single scan that establishes an agent-to-agent relationship with tiered permissions, encrypted communication, and a clear path for the relationship to deepen or dissolve over time.

To close the six protocol gaps described earlier, Jasnova has published HARP (Human Agent Representation Protocol), an open specification that extends A2A and MCP with the missing pieces: human identity binding through DIDs and Verifiable Credentials, a three-tier permission model with a sealed local classification, full relationship lifecycle management, delegation boundaries, enterprise policy overlays, and a QR-based connection handshake. The spec defines five standard interaction types (schedule, query, negotiate, delegate, notify) and includes SCIM provisioning, audit logging, and cross-organization federation for enterprise adoption. HARP is open source under Apache 2.0.

The building blocks are here. The protocols are being standardized. The products are being built. The question is not whether personal digital twins will exist. It is who builds the one that people trust enough to represent them.

"Within a year we will have digital replicas of all our key people and we will be able to talk with them and even ask them to talk with each other."

Josh Bersin, October 2025

Key Takeaways

- A personal digital twin is not an AI assistant. It is a specific replica of you that knows your communication style, decision patterns, preferences, and boundaries. It acts as you would act in routine interactions

- The QR code exchange is the agent handshake. Scanning connects two digital twins through A2A Agent Cards, DID-based identity verification, tiered permissions, and a persistent encrypted channel

- Digital twins handle scheduling, information exchange, meeting prep and follow-up, negotiation, and social coordination. Humans step in only when the stakes require it

- Privacy must be tiered, not binary. 72% of people want context-specific disclosure controls. Four tiers (public, semi-private, private, sealed) give users granular control over what their twin shares

- The protocol stack is forming: A2A for agent-to-agent, MCP for agent-to-tool, ANP for decentralized identity, NLIP for natural language interaction. Six gaps remain for personal use, including portable identity, relationship lifecycle management, and delegation boundaries

- Agent negotiation follows scaling laws: stronger models negotiate systematically better outcomes, with a 14.9% price gap when the seller model varies versus 2.6% when the buyer model varies. This creates "agentic inequality" where access to better AI becomes a direct wealth determinant. Falling AI costs may mitigate this over time

- Identity verification must be deterministic, not probabilistic. LLM agents skip authentication 5-80% of the time when left to handle it themselves. Security logic belongs in cryptographic components, not in the model

- No court has issued a definitive ruling on fully autonomous agent behavior. Current law attributes all agent decisions to the deploying human or company. The EU Product Liability Directive (December 2026) will be the first major test

References

- Josh Bersin: Arriving Now, The Digital Twin

- Google: A2A - A New Era of Agent Interoperability

- A2A Protocol Specification

- W3C Agent Network Protocol

- Ecma International: NLIP Standards for Agent Communication

- Linux Foundation: Agentic AI Foundation

- TechCrunch: Blockit AI Calendar Negotiation

- Privacy Boundaries in AI-Delegated Information Sharing

- The Automated but Risky Game: Agent-to-Agent Negotiations

- AI Agents with Decentralized Identifiers and Verifiable Credentials

- Oxford Martin School: Agentic Inequality

- The AI-Mediated Communication Dilemma (Synthese 2025)

- Cloud Security Alliance: Agentic Trust Framework

- HARP: Human Agent Representation Protocol - Specification